Modern applications run on structured data — from APIs and event streams to AI pipelines and vector databases. JSON has been the default for decades, but today’s AI workloads reveal its limitations: excessive punctuation, inefficient tokenization, and unnecessary payload size.

TOON (Token-Oriented Object Notation) is a compact, token-oriented way to represent structured data that uses fewer characters and fewer LLM tokens than the equivalent JSON.

🔷 What Is TOON?

TOON is a minimal, token-stream-based representation of objects and arrays.

Instead of punctuation such as { } [ ] : and , TOON uses single-letter structural tokens:

| Symbol | Meaning |
|---|---|
| O | Start object |
| E | End object |
| A | Start array |
| Z | End array |

Keys and values follow as simple space-separated tokens, with no quoting noise.

Example:

O id 12345 name TokenTest active true scores A 10 20 30 Z E
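To make the notation concrete, here is a minimal Go sketch that produces the token stream above from an in-memory value. The names `Field`, `Object`, and `EncodeStream` are hypothetical and not part of any TOON library. The sketch assumes that keys and string values contain no whitespace and never collide with the reserved tokens O, E, A, and Z.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// Field is one key/value pair. A slice of fields (rather than a map)
// keeps keys in a fixed order, so the output is deterministic.
type Field struct {
	Key string
	Val any
}

// Object is an ordered list of fields (hypothetical helper type).
type Object []Field

// appendTokens walks v and appends its tokens to out.
// Supported types in this sketch: Object, []any, string, int, bool.
// Anything else falls back to fmt.Sprint.
func appendTokens(out []string, v any) []string {
	switch x := v.(type) {
	case Object:
		out = append(out, "O")
		for _, f := range x {
			out = append(out, f.Key)
			out = appendTokens(out, f.Val)
		}
		return append(out, "E")
	case []any:
		out = append(out, "A")
		for _, e := range x {
			out = appendTokens(out, e)
		}
		return append(out, "Z")
	case string:
		return append(out, x)
	case int:
		return append(out, strconv.Itoa(x))
	case bool:
		return append(out, strconv.FormatBool(x))
	default:
		return append(out, fmt.Sprint(x))
	}
}

// EncodeStream returns the space-separated token stream for v.
func EncodeStream(v any) string {
	return strings.Join(appendTokens(nil, v), " ")
}

func main() {
	rec := Object{
		{"id", 12345},
		{"name", "TokenTest"},
		{"active", true},
		{"scores", []any{10, 20, 30}},
	}
	fmt.Println(EncodeStream(rec))
}
```

An ordered slice is used instead of a Go map because map iteration order is not fixed, which would make the emitted stream differ from run to run.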

🔷 Where TOON Is Useful

TOON works exceptionally well in:

- High-performance APIs

- Real-time analytics pipelines

- IoT telemetry streams

- Low-bandwidth environments

- Message queues & event buses

- Binary protocol bridges

- AI systems, embeddings, LLM pipelines & vector DBs

🔷 Why TOON Is Better Than JSON

1️⃣ Fewer Characters, Fewer Tokens, Smaller Payloads

- No braces {}

- No brackets []

- No quotes "

- No commas ,

- No colons :

This means significantly fewer bytes and significantly fewer AI tokens.

2️⃣ Stream-Friendly Parsing

TOON is token-first: every element carries meaning.

You can:

✔ parse incrementally

✔ process infinite streams

✔ avoid deep lookahead

✔ eliminate heavy memory buffers

3️⃣ Lower CPU & Memory Overhead

TOON parsers:

- require fewer allocations

- avoid escape-handling overhead

- run predictably in O(n)

Perfect for constrained devices or high-throughput servers.

4️⃣ AI-Optimized by Design

With less punctuation to encode, an LLM tokenizer typically produces fewer tokens for TOON than for the equivalent JSON.

This directly reduces:

- prompt cost

- inference latency

- context window usage

- embedding input length

- bandwidth across pipelines

🔷 Serialization & Deserialization Improvements

Serialization with TOON

- Emit only meaningful tokens

- No quoting or escaping rules for keys

- Output is always compact and linear

Deserialization with TOON

- Token-by-token parsing

- No brace matching

- No need to buffer entire documents

- Works perfectly for streaming ingestion
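The deserialization steps can be sketched as a small recursive decoder for the O/E/A/Z token stream. This is an illustration under assumptions, not a reference implementation: `DecodeStream` and `parseValue` are hypothetical names, scalars are guessed to be int, bool, or string (in that order), and tokens are assumed to be whitespace-separated. For brevity it splits the whole input up front; a streaming variant would pull tokens from a scanner instead.

```go
package main

import (
	"errors"
	"fmt"
	"strconv"
	"strings"
)

// parseValue decodes one value starting at toks[i] and returns the value
// together with the index of the first token after it.
func parseValue(toks []string, i int) (any, int, error) {
	if i >= len(toks) {
		return nil, i, errors.New("unexpected end of input")
	}
	switch toks[i] {
	case "O":
		obj := map[string]any{}
		i++
		for i < len(toks) && toks[i] != "E" {
			key := toks[i]
			val, next, err := parseValue(toks, i+1)
			if err != nil {
				return nil, next, err
			}
			obj[key] = val
			i = next
		}
		if i >= len(toks) {
			return nil, i, errors.New("object not closed with E")
		}
		return obj, i + 1, nil
	case "A":
		arr := []any{}
		i++
		for i < len(toks) && toks[i] != "Z" {
			val, next, err := parseValue(toks, i)
			if err != nil {
				return nil, next, err
			}
			arr = append(arr, val)
			i = next
		}
		if i >= len(toks) {
			return nil, i, errors.New("array not closed with Z")
		}
		return arr, i + 1, nil
	case "E", "Z":
		return nil, i, fmt.Errorf("unexpected %q", toks[i])
	}
	// Scalars: try int, then bool, otherwise keep the raw string.
	if n, err := strconv.Atoi(toks[i]); err == nil {
		return n, i + 1, nil
	}
	if toks[i] == "true" || toks[i] == "false" {
		return toks[i] == "true", i + 1, nil
	}
	return toks[i], i + 1, nil
}

// DecodeStream parses a complete token stream into Go values.
func DecodeStream(s string) (any, error) {
	toks := strings.Fields(s)
	v, next, err := parseValue(toks, 0)
	if err != nil {
		return nil, err
	}
	if next != len(toks) {
		return nil, errors.New("trailing tokens after value")
	}
	return v, nil
}

func main() {
	v, err := DecodeStream("O id 12345 name TokenTest active true scores A 10 20 30 Z E")
	fmt.Println(v, err)
}
```

Each token is inspected once and no lookahead beyond the current token is needed, which is what keeps the decoder simple.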

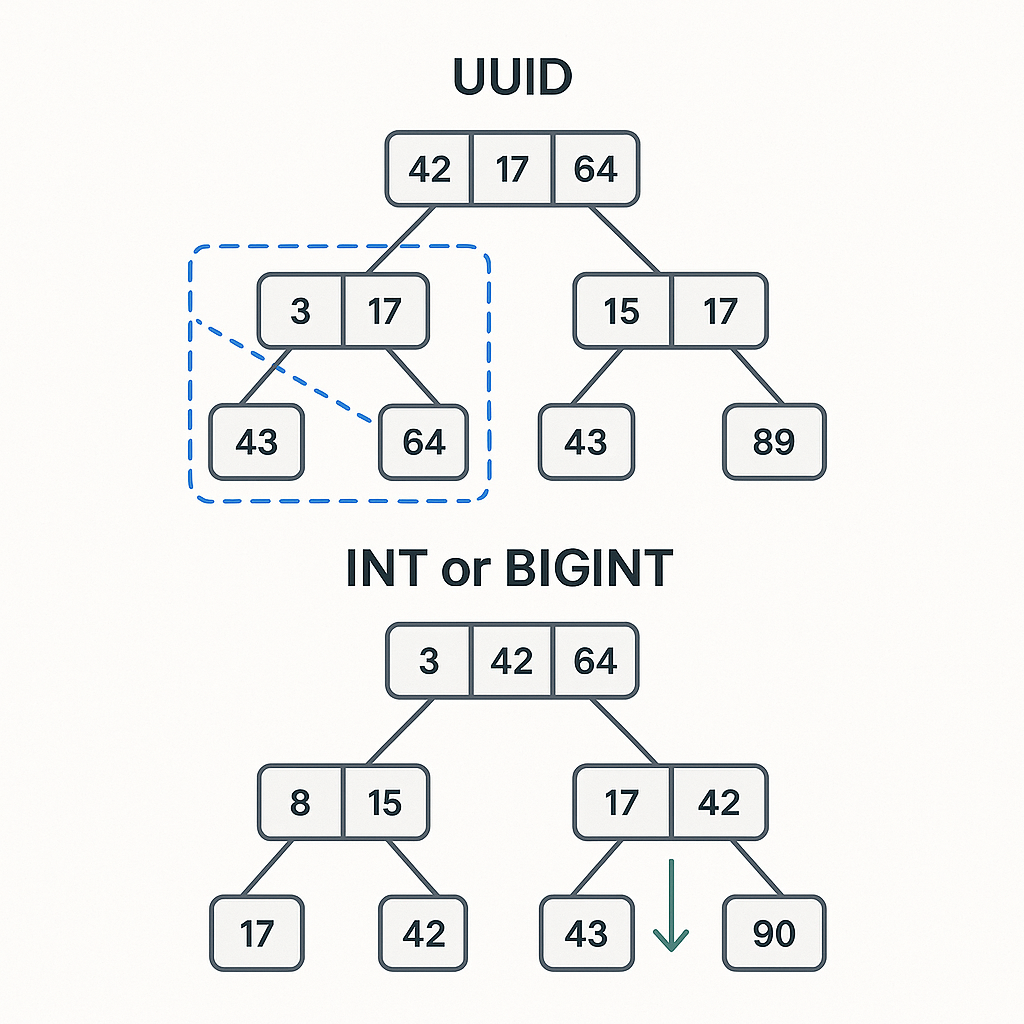

🔷 Token Count Comparison: JSON vs TOON

JSON

{"id":12345,"name":"TokenTest","active":true,"scores":[10,20,30]}

JSON tokens: ~24–30 (depending on the tokenizer)

TOON

O id 12345 name TokenTest active true scores A 10 20 30 Z E

TOON tokens: ~16

→ roughly 33–47% fewer tokens (16 versus 24–30)
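The comparison can be approximated in code by counting lexical elements rather than real LLM tokens. The Go sketch below counts every structural character, quoted string, and bare literal in the JSON text, and every whitespace-separated token in the TOON text. The function names are hypothetical, and the counts are only a proxy: actual BPE tokenizers split text differently, so the figures will not match a model's tokenizer exactly.

```go
package main

import (
	"fmt"
	"strings"
)

// countJSONLexemes counts lexical elements in a JSON text: each
// structural character ({ } [ ] : ,), each quoted string, and each
// bare literal (number, true, false, null) counts as one.
func countJSONLexemes(s string) int {
	count := 0
	for i := 0; i < len(s); {
		c := s[i]
		switch {
		case c == ' ' || c == '\t' || c == '\n' || c == '\r':
			i++
		case strings.IndexByte("{}[]:,", c) >= 0:
			count++
			i++
		case c == '"':
			i++ // skip opening quote
			for i < len(s) && s[i] != '"' {
				if s[i] == '\\' {
					i++ // skip the escaped character
				}
				i++
			}
			i++ // skip closing quote
			count++
		default:
			// bare literal: consume until a delimiter or whitespace
			for i < len(s) && strings.IndexByte("{}[]:, \t\n\r\"", s[i]) < 0 {
				i++
			}
			count++
		}
	}
	return count
}

// countStreamTokens counts whitespace-separated TOON tokens.
func countStreamTokens(s string) int {
	return len(strings.Fields(s))
}

func main() {
	js := `{"id":12345,"name":"TokenTest","active":true,"scores":[10,20,30]}`
	toon := "O id 12345 name TokenTest active true scores A 10 20 30 Z E"
	j, t := countJSONLexemes(js), countStreamTokens(toon)
	// Prints: JSON lexemes: 23, TOON tokens: 14, reduction: 39%
	fmt.Printf("JSON lexemes: %d, TOON tokens: %d, reduction: %.0f%%\n",
		j, t, 100*(1-float64(t)/float64(j)))
}
```

By this lexical measure the example drops from 23 elements to 14. To measure real token counts, run both strings through the tokenizer of the model you actually use.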

🔷 Why TOON Is Better for AI Data

LLMs don’t process characters.

They process tokens.

JSON is token-heavy because:

- Quotes become tokens

- Braces become tokens

- Colons + commas become tokens

- Escapes create fragmentation

- Nested structures amplify noise

- Key names repeat everywhere

Example: JSON Tokenization

{"id":123}

Often becomes:

{

"

id

"

:

123

}

7–10 tokens for a trivial object.

Equivalent TOON:

O id 123 E

Often → 4 tokens.

🔷 AI Advantages Summary

| Feature | JSON | TOON |
|---|---|---|
| Token cost | ❌ High | ✅ Low |
| Embedding input length | ❌ Longer | ✅ Shorter |
| RAG chunking | ❌ Noisy | ✅ Clean |
| Finetuning | ❌ Expensive | ✅ Efficient |
| Tokenization errors | ❌ Common | ✅ Minimal |
| Pipeline bandwidth | ❌ Wasteful | ✅ Optimized |

Because LLM APIs are typically billed per token, fewer tokens translate directly into lower cost.

🔷 Why JSON Is Bad for AI Pipelines

1. Token Noise

Punctuation can contribute roughly 20–40% of total tokens, depending on the payload and the tokenizer.

2. Repeated Quotes

"name" often becomes 3 tokens.

3. Extra Cognitive Load for Models

LLMs must “mentally parse” braces and punctuation.

4. Wasted Context

JSON is not space-efficient.

5. Noisy Embeddings

Extra punctuation reduces embedding clarity.

6. Hard for Incremental Parsing

Models must read entire objects before interpreting structure.

🔷 JSON vs TOON for LLM Tokenization

JSON

{"user":{"id":123,"role":"admin"}}

~22–28 tokens

TOON

O user O id 123 role admin E E

~10 tokens

→ 55–65% reduction

🔷 Compact TOON Spec (Used in Implementation Below)

A practical, minimal TOON encoding:

| Type | Marker | Example |
|---|---|---|
| Number | # | id#123 |
| String | ' | name'Suraj |
| Bool | ! | active!1 |
| String array | ( ) | tags(5:photo,6:editor) |

Field Separator: ,

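Strings: a leading ' starts the value, which runs to the next field separator (there is no closing quote); a ' inside the value is escaped as \'. Values that contain the field separator are not handled by this minimal spec.

Example:

id#123,name'Suraj,active!1,tags(5:photo,6:editor)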

🔷 JSON vs TOON Example (Character Size)

JSON

{"id":123,"name":"Suraj","active":true,"tags":["photo","editor"]}

Characters: 65

TOON

id#123,name'Suraj,active!1,tags(5:photo,6:editor)

Characters: 49

→ about 25% smaller

→ ~25–40% fewer tokens depending on tokenizer

🔷 Go Implementation: Encode & Decode TOON

package main

import (

"errors"

"fmt"

"strconv"

"strings"

)

// Record is a sample data model

type Record struct {

ID int

Name string

Active bool

Tags []string

}

// escapeString escapes single quote for our TOON 'string' format

func escapeString(s string) string {

return strings.ReplaceAll(s, "'", `\'`)

}

// unescapeString reverses escape

func unescapeString(s string) string {

return strings.ReplaceAll(s, `\'`, "'")

}

// EncodeTOON encodes Record into compact TOON string:

// id#123,name'Suraj,active!1,tags(5:photo,6:editor)

func EncodeTOON(r Record) string {

var b strings.Builder

b.WriteString("id#")

b.WriteString(strconv.Itoa(r.ID))

b.WriteString(",name'")

b.WriteString(escapeString(r.Name))

b.WriteString(",active!")

if r.Active {

b.WriteString("1")

} else {

b.WriteString("0")

}

// tags as (len:val,...) with length for safe delimiting

b.WriteString(",tags(")

for i, t := range r.Tags {

if i > 0 {

b.WriteString(",")

}

b.WriteString(strconv.Itoa(len(t)))

b.WriteString(":")

b.WriteString(escapeString(t))

}

b.WriteString(")")

return b.String()

}

// DecodeTOON decodes the compact TOON back into Record.

// It's a simple parser tailored to the spec above.

func DecodeTOON(s string) (Record, error) {

r := Record{}

parts := splitTopLevel(s, ',') // split on top-level commas

for _, p := range parts {

if p == "" {

continue

}

switch {

case strings.HasPrefix(p, "id#"):

nstr := strings.TrimPrefix(p, "id#")

n, err := strconv.Atoi(nstr)

if err != nil {

return r, err

}

r.ID = n

case strings.HasPrefix(p, "name'"):

v := strings.TrimPrefix(p, "name'")

r.Name = unescapeString(v)

case strings.HasPrefix(p, "active!"):

v := strings.TrimPrefix(p, "active!")

if v == "1" {

r.Active = true

} else if v == "0" {

r.Active = false

} else {

return r, errors.New("invalid bool value")

}

case strings.HasPrefix(p, "tags(") && strings.HasSuffix(p, ")"):

inner := p[len("tags(") : len(p)-1]

// elements are comma-separated; each element is len:val

if inner == "" {

r.Tags = []string{}

continue

}

elemParts := splitTopLevel(inner, ',')

var tags []string

for _, e := range elemParts {

// find ':' separator

idx := strings.Index(e, ":")

if idx < 0 {

return r, errors.New("invalid tag element")

}

val := unescapeString(e[idx+1:])

// validate the declared length (bytes of the unescaped value)

if n, err := strconv.Atoi(e[:idx]); err != nil || n != len(val) {

return r, errors.New("invalid tag length")

}

tags = append(tags, val)

}

r.Tags = tags

default:

// ignore unknown fields or return error depending on policy

// we'll ignore so it's forward-compatible

}

}

return r, nil

}

// splitTopLevel splits a string by sep but does not split inside parentheses.

// Useful for our simple comma-separated top-level design.

func splitTopLevel(s string, sep rune) []string {

var out []string

level := 0

start := 0

for i, ch := range s {

if ch == '(' {

level++

} else if ch == ')' {

if level > 0 {

level--

}

} else if ch == sep && level == 0 {

out = append(out, s[start:i])

start = i + 1

}

}

// last

if start <= len(s)-1 {

out = append(out, s[start:])

}

return out

}

func main() {

rec := Record{

ID: 123,

Name: "Suraj",

Active: true,

Tags: []string{"photo", "editor"},

}

toon := EncodeTOON(rec)

fmt.Println("TOON:", toon)

fmt.Println("TOON length:", len(toon))

jsonExample := `{"id":123,"name":"Suraj","active":true,"tags":["photo","editor"]}`

fmt.Println("JSON:", jsonExample)

fmt.Println("JSON length:", len(jsonExample))

// decode back

parsed, err := DecodeTOON(toon)

if err != nil {

fmt.Println("Decode error:", err)

} else {

fmt.Printf("Parsed struct: %+v\n", parsed)

}

}

Output:

TOON: id#123,name'Suraj,active!1,tags(5:photo,6:editor)

TOON length: 49

JSON: {"id":123,"name":"Suraj","active":true,"tags":["photo","editor"]}

JSON length: 65

Parsed struct: {ID:123 Name:Suraj Active:true Tags:[photo editor]}
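The encoder's comment says the length prefix on each tag is there for safe delimiting. One way to use it for that, sketched here as an assumption rather than as part of the listing above, is to read each value by its declared byte length instead of splitting on commas. `parseLengthPrefixed` is a hypothetical helper. It handles only the contents between the parentheses and assumes values are stored without escaping.

```go
package main

import (
	"errors"
	"fmt"
	"strconv"
	"strings"
)

// parseLengthPrefixed reads items of the form len:val separated by commas,
// e.g. "5:photo,6:editor". Each value is taken by its declared byte
// length, so a value may itself contain ',' or ':'.
func parseLengthPrefixed(s string) ([]string, error) {
	var out []string
	for len(s) > 0 {
		colon := strings.IndexByte(s, ':')
		if colon < 0 {
			return nil, errors.New("missing ':' after length")
		}
		n, err := strconv.Atoi(s[:colon])
		if err != nil || n < 0 {
			return nil, errors.New("invalid length prefix")
		}
		rest := s[colon+1:]
		if n > len(rest) {
			return nil, errors.New("declared length exceeds input")
		}
		out = append(out, rest[:n])
		s = rest[n:]
		if len(s) > 0 {
			// a comma must separate this item from the next one
			if s[0] != ',' {
				return nil, errors.New("expected ',' between items")
			}
			s = s[1:]
			if len(s) == 0 {
				return nil, errors.New("trailing ','")
			}
		}
	}
	return out, nil
}

func main() {
	tags, err := parseLengthPrefixed("5:photo,9:a,b:c (x)")
	fmt.Printf("%q %v\n", tags, err)
}
```

With this approach a tag such as a,b:c (x) survives a round trip, whereas a comma-splitting parser would cut it in two.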

🎯 Conclusion

TOON shows how a small shift in how we represent data can create a massive impact — especially in AI-driven systems.

By eliminating JSON’s punctuation-heavy structure and replacing it with a clean, semantic token stream, TOON delivers:

- lower CPU usage

- smaller payloads

- faster serialization

- efficient streaming

- dramatically reduced LLM token costs

- cleaner embeddings

- more effective RAG pipelines

As AI becomes the core of modern software, formats optimized for token efficiency will outperform legacy notations. TOON isn’t just a JSON alternative — it’s a next-generation data model designed for AI-native systems.

🔗 References & Further Reading

- Official Go Playground (TOON example): https://go.dev/play/p/LhJQFw-KsL6

- Understanding tokenization in LLMs

- OpenAI & Anthropic tokenizer behavior

- Embedding and RAG optimization best practices
